Quality/Quality/en

What is the quality landscape in which Wikimedia operates?

The Wikimedia approach to quality

Ed Chi and his colleagues at PARC summarize Wikimedia's approach to quality as follows: because anyone can edit anything, and can examine its edit history, the content will become (or already has become) reliable.

They then go on to point out that this innovative and socially transparent process is actually very similar to well-established academic processes for ascertaining the truth:

- Anyone can examine and question

- Incorrect information can be corrected

- Disputed points of view can be listed side by side

There is much debate, however, about the best way to measure the outcomes and success of this approach to quality. The list of potential quality criteria for the types of content included in Wikimedia projects is long, and includes:

- Accurate

- Credible

- Complete

- Neutral

- Relevant

Getting to an "answer" about Wikimedia's perceived and actual quality is complicated by Wikipedia's size and popularity. As Simson Garfinkel argues, Wikipedia's size and popular presence, combined with its standards for inclusion, have created a new framework for thinking about the "truth" of the information Wikimedia projects contain. In a recent Technology Review article, he explains this further by stating that, "Wikipedia's standard for inclusion has become its de facto standard for truth, and since Wikipedia is the most widely read online reference on the planet, it's the standard of truth that most people are implicitly using when they type a search term into Google or Yahoo. On Wikipedia, truth is received truth: the consensus view of a subject." [1]

Core content policies

The above is a direct reference to Wikipedia's three core content policies, which determine the type and quality of content that is acceptable in the main namespace:

- Verifiability. As stated on Wikipedia:Verifiability, "The threshold for inclusion in Wikipedia is verifiability, not truth - that is, whether readers are able to check that material added to Wikipedia has already been published by a reliable source, not whether we think it is true". Reliable sources are defined, in general, as "peer-reviewed journals and books published in university presses; university-level textbooks; magazines, journals, and books published by respected publishing houses; and mainstream newspapers. Electronic media may also be used. As a rule of thumb, the greater the degree of scrutiny involved in checking facts, analyzing legal issues, and scrutinizing the evidence and arguments of a particular work, the more reliable the source is".

- Neutral point of view. Wikipedia:Neutral point of view states that, "All Wikipedia articles and other encyclopedic content must be written from a neutral point of view, representing fairly, and as far as possible without bias, all significant views that have been published by reliable sources".

- No original research. Wikipedia:No original research states that "Wikipedia does not publish original research or original thought. This includes unpublished facts, arguments, speculation, and ideas; and any unpublished analysis or synthesis of published material that serves to advance a position. This means that Wikipedia is not the place to publish your own opinions, experiences, arguments, or conclusions".

One quality bar, or many?

It is also worth asking whether a single definition of quality can be applied to all content. Two arguments present themselves:

- Quality is entirely objective, and all Wikimedia content can and should be measured against the same quality bar

- The quality bar for content varies, depending on factors such as user, purpose, and category/topic (e.g. the standards for a particular field)

Are there other arguments that could be made here? What evidence can be provided in support of, or against, one argument or the other?

What is Wikimedia's current position in this quality landscape?

Overview of quality arguments and criticisms

Simple statistics would seem to suggest that these core policies are successful, and that the user community perceives Wikipedia to be of high enough quality:

(data to be filled in)

- X million volunteers have contributed over YY million edits to Z million articles

- English Wikipedia is currently positioned as the XX most visited site on the internet and serves an average of XX,000 requests per second

- “Wikipedia trades authority for accessibility, breadth, and speed. It usually costs money to access a professionally edited encyclopedia. However, Wikipedia is available free of charge. Users who have a casual interest in a subject may find the ease with which they can access Wikipedia to be worth the risk of occasionally being misled” [2]

However, some argue that the perception of any quality issues in Wikipedia undermines the entire project, regardless of actual quality. At a high level, the logic of this argument states that, even though Wikipedia frequently contains good information, it can also contain bad information. Because users have no way of telling the difference, they have no idea what to expect, and they can’t rely on any information.

The following criticisms of Wikipedia’s actual quality come from the Citizendium website, which claims that Wikipedia has the following quality issues:

- Too many articles are written amateurishly

- Too many articles are mere disconnected grab-bags of factoids, not made coherent by any sort of narrative

- In some fields and topic areas, groups “squat” on topics in order to make them reflect a certain bias

- When experts add obsessive amounts of detail to articles, this can make Wikipedia difficult to read and impossible to verify

- Lack of a credible mechanism to approve article versions

- Vandalism made possible because of anonymous contribution

- The people with the most influence are those with the most time, not the most knowledge

Data and research on Wikimedia's actual quality

Featured and good articles

An overview of Featured and Good articles can be found here and here

Below is data on the growth of featured and good articles over time. In general, the number of these reviewed articles has remained small relative to the growth in the overall number of articles:

It is also possible to look more closely at the quality of articles that are considered "vital" according to Wikipedia:Vital articles:

A version of this data is directly referenced in one of the proposals that has been submitted as part of the strategic planning process:

"Wikipedia, at last count, had 2,993,967 articles. This is a staggering number and testiment to the work of many people, however it betrays the fact that we have yet to raise the vital articles, articles that every encyclopedia needs, to a consistent quality. Of the level 1 vital articles, only 1 has reached even "Good article" status. At level 2 the ratio becomes arguably worse.

I think we owe it to ourselves and the status of Wikipedia as a respectable source of information, to improve the vital articles to a respectable level, even at the neglect of other articles. As much as it may be unpopular, I cannot think of a better way to improve the respectibility and impact of Wikipedia than this."

Vandalism

A WikiProject on vandalism studies can be found here

Below are highlights from recent analysis around the prevalence of vandalism in Wikipedia articles:

- "The Collaborative Organization of Knowledge" (2008) - A study by Diomidis Spinellis and Panagiotis Louridas that found that "4% of article revisions were tagged in descriptive comment as 'reverts' - a typical response to vandalism. They occurred an average of 13 hours after their preceding change. Looking for articles with at least one revert comment, 11% of Wikipedia articles have been vandalized at least once" [3]

- New analysis posted on foundation-l that found:

  - Focusing on just this year, vandalism was present 0.21% of the time (0.27% over the entire history of Wikipedia)

  - The time distribution of vandalism has a long tail; the median time to revert is 6.7 minutes with a mean time to revert of 18.2 hours (one revert in the sample went back 45 months)

  - Nearly 50% of reverts occur in 5 minutes or less

  - However, the 5% of vandalism that persists longer than 35 hours is responsible for 90% of the vandalism a visitor is likely to encounter at random

More summary data is here

The original foundation-l posting is here

If 4% of article revisions are vandalism-related reverts, but only 0.27% of articles contain vandalism at any point in time, does that mean that a small subset of articles contain multiple instances of vandalism?
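The long-tail pattern reported above (a median time to revert of minutes, a mean of many hours, and a small share of incidents accounting for most of what a visitor sees) can be illustrated with a short sketch. The function name `exposure_summary`, its parameters, and the sample durations are hypothetical and chosen only for illustration; they are not taken from the foundation-l analysis.

```python
from statistics import mean, median


def exposure_summary(durations_hours, tail_fraction=0.05):
    """Summarize how long vandalism stayed visible across a sample of incidents.

    durations_hours: time from each act of vandalism to its revert, in hours.
    tail_fraction: share of incidents treated as the long-lived tail.
    """
    ordered = sorted(durations_hours)
    total_exposure = sum(ordered)
    # Number of incidents in the longest-lived tail (at least one).
    tail_count = max(1, round(len(ordered) * tail_fraction))
    tail_exposure = sum(ordered[-tail_count:])
    return {
        "median_hours": median(ordered),
        "mean_hours": mean(ordered),
        # Share of all vandalized time contributed by the tail incidents.
        "tail_share_of_exposure": tail_exposure / total_exposure,
    }


# Hypothetical sample: 19 reverts within six minutes and one that
# lingered for 30 days. These numbers are invented for illustration.
sample = [0.1] * 19 + [720.0]
summary = exposure_summary(sample)
print(summary)
```

In this invented sample the median is 0.1 hours while the mean is roughly 36 hours, and the single long-lived incident accounts for more than 99% of the total time that vandalism was visible. The same mechanism would explain why a visitor arriving at a random moment mostly encounters vandalism from the slow-to-revert tail.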

Quality by subject/category

Working list of research comparing Wikipedia's content to that of alternative providers:

- Reference Services Review (2008) – “Comparison of Wikipedia and other encyclopedias for accuracy, breadth, and depth in historical articles” [4]

An analysis of nine Wikipedia historical articles against comparable articles in Encyclopedia Britannica, the Dictionary of American History, and American National Biography Online. Articles were compared in terms of depth, accuracy, and detail.

While admittedly limited in scope, “The study did reveal inaccuracies in eight of the nine entries and exposed major flaws in at least two of the nine Wikipedia articles. Overall, Wikipedia’s accuracy rate was 80 percent compared with 95-96 percent accuracy within the other sources. This study does support the claim that Wikipedia is less reliable than other reference resources. Furthermore, the research found at least five unattributed direct quotations and verbatim text from other sources with no citations”

- PC Pro (2007) – Wikipedia Uncovered: Wikipedia vs. The Old Guard [5]

In an effort to compare Wikipedia with Britannica.com and Encarta, PC Pro asked "three experts to evaluate articles pertaining to their field, to give us an unscientific snapshot of the competing sources".

Articles were evaluated in History, Chemistry, and Geology. Out of the four articles, Wikipedia came out on top once (a Chemistry article on Atherosclerosis). What is potentially most telling is the extent to which comments on the Wikipedia articles varied by subject and topic, including:

- “More of a benefit to the serious student than Encarta or Britannica”

- “Largely sound on the facts”

- “Good for the bare facts but didn’t read very well”

- “Marked deterioration towards the end”

- The Denver Post (2007) – “Grading Wikipedia”

- Nature (2005) – Internet Encyclopedias go head to head

Additional data/research

Working list of additional research into the quality of Wikimedia content:

- “Comparing featured article groups and revision patterns correlations in Wikipedia” (2009)

- “Automatically Assessing the Quality of Wikipedia Articles” (2008)

- “Assessing the value of cooperation in Wikipedia” (2007)

- “The Quality of Open Source Production: Zealots and Good Samaritans in the Case of Wikipedia” (2007)

- “An empirical examination of Wikipedia’s credibility” (2006)

- Have you seen the page at w:Wikipedia:External peer review? --Philippe 02:44, 31 July 2009 (UTC)

What quality control/assurance initiatives are already in place, or are being tested by Wikimedia and the community?

Overview of current quality control initiatives

Wikimedia and the Wikimedia community currently have, or are experimenting with, several quality control/assurance initiatives:

- Bots

- Dedicated vandalism volunteers

- Internal peer review (featured and good articles)

- External peer review

- WikiDashboard (Ed Chi and his colleagues at PARC)

- Flagged revisions

Related data and analysis

Flagged revisions

Courtesy of User:Voice of all, here is a link to data showing review times for anonymous edits to the German Wikipedia from 1/1/09 to 1/6/09:

What quality control/assurance initiatives could Wikimedia consider to improve actual and perceived quality?

Researchers, community members, and alternative providers suggest that Wikipedia has a wide range of options for improving actual and perceived quality. A working list of proposed initiatives follows, roughly organized according to alignment with the current Wikimedia model:

- Providing visual cues to help users differentiate content that is likely to be accurate from content that is likely to be inaccurate (e.g. color-coding text based on venerability)

- Expanding flagged revisions to other language Wikipedias

- Allowing different versions of articles on the same subject

- Moving to a central editing process

- Creating separate stable and experimental Wikipedias

How alternative providers are utilizing these approaches

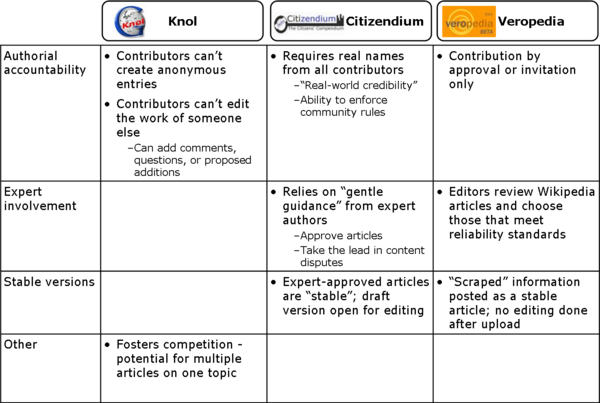

Alternative providers of Wikimedia-type content have adopted variations of many of these initiatives. Information about this has begun to be catalogued here (from provider websites):

What is the potential impact of these quality control/assurance initiatives?

Where are the most salient intersections between content and quality?

Looking for input

- What other frameworks exist for thinking about the quality of Wikimedia's content? What criteria are most important to consider?

- What other research and data is available regarding the quality (or quality problems) of Wikimedia content?

- What other initiatives have already been tried or discussed? What data is available to demonstrate the success/limitations of these initiatives?

Notes

- ↑ Garfinkel, Simson L., "Wikipedia and the Meaning of Truth", Technology Review, November/December 2008. http://www.technologyreview.com/web/21558/?a=f

- ↑ Cross, Tom, "Puppy smoothies: Improving the reliability of open, collaborative wikis" http://outreach.lib.uic.edu/www/issues/issue11_9/cross/index.html

- ↑ Spinellis and Louridas, "The Collaborative Organization of Knowledge", Communications of the ACM, August 2008 [1]

- ↑ [2]

- ↑ [3]